In this tutorial, we will scrape Google Play Store data using Node.js with Unirest and Cheerio.

Let’s start scraping the Google Play Store

In this section, we will scrape the Google Play Store. Before we do, let's review the requirements for this project.

Web Parsing with CSS selectors

Searching for tags in raw HTML is both difficult and time-consuming. It is easier to use the CSS Selectors Gadget to pick the right selectors for the elements you want to scrape.

This gadget can help you build a CSS selector that matches exactly what you need. Here is the link to the tutorial, which will teach you to use this gadget for selecting the best CSS selectors according to your needs.

User Agents

The User-Agent request header identifies the application, operating system, vendor, and version of the requesting user agent. Sending a realistic User-Agent helps a scraper's request to Google look like a visit from a real user.

You can also rotate User Agents. Read more about this in the article How to fake and rotate User Agents using Python 3.
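The linked article covers Python, but the same idea carries over to Node.js. Here is a minimal sketch, assuming a small hand-maintained pool of User-Agent strings. The names `USER_AGENTS`, `pickUserAgent`, and `buildHeaders` are hypothetical and not part of Unirest or Cheerio.

```javascript
// Hypothetical pool of User-Agent strings; replace with current ones as needed.
const USER_AGENTS = [
  "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/107.0.0.0 Safari/537.36",
  "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/104.0.0.0 Safari/537.36",
];

// Return a random entry from the pool (hypothetical helper).
const pickUserAgent = (pool = USER_AGENTS) =>
  pool[Math.floor(Math.random() * pool.length)];

// Build a fresh headers object for each request.
const buildHeaders = () => ({ "User-Agent": pickUserAgent() });
```

Calling `buildHeaders()` before every request gives each request a User-Agent drawn at random from the pool.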

If you want to further safeguard your IP from being blocked by Google, you can try these 10 Tips to avoid getting Blocked while Scraping Google.

Install Libraries

To start scraping Google Play Store results, we first need to install some NPM libraries.

Before starting, make sure you have set up your Node.js project and installed both packages, Unirest JS and Cheerio JS. You can install both packages from the above link.

Process

Let's begin scraping the Google Play apps results. We will use Unirest JS to fetch the raw HTML and Cheerio JS to parse it.

Note: We will not scrape the “Top charts” results from Google Play. They are loaded by JavaScript, so scraping them requires Puppeteer JS, which is slow and very CPU-intensive.

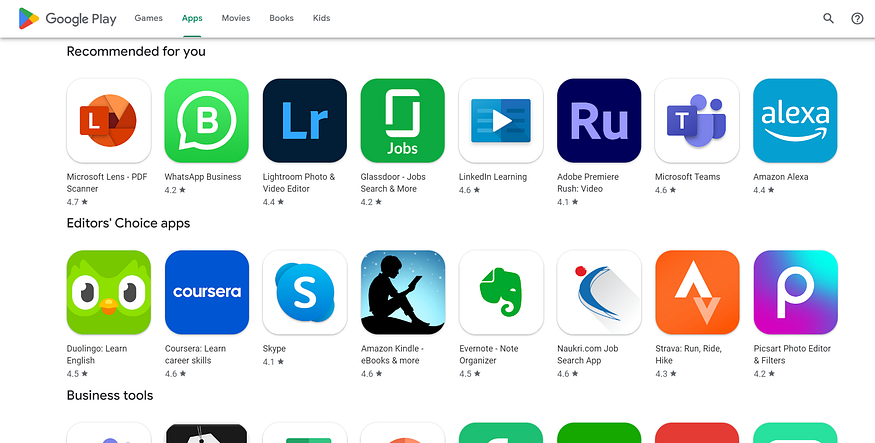

Open the link below in your browser so we can start selecting the HTML tags for the required elements.

https://play.google.com/store/apps

Let us make a GET request using Unirest JS on the target URL.

const unirest = require("unirest");
const cheerio = require("cheerio");

const getGooglePlayData = async () => {
  const url = "https://play.google.com/store/apps";

  // Send a browser-like User-Agent with the request.
  const head = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/107.0.0.0 Safari/537.36",
  };

  // Fetch the raw HTML of the page.
  const response = await unirest.get(url).header(head);

  // Load the HTML into Cheerio for parsing.
  const $ = cheerio.load(response.body);

Step-by-step explanation:

- In the first and second lines, we declared the constant for the Unirest and Cheerio libraries.

- In the next line, we declared a function to get the Google Play Data.

- After that, we declared a constant for the URL and a head object which consists of the User Agent.

- Next, we made the request on the URL with the help of Unirest.

- In the last line, we declared a Cheerio instance variable to load the response.
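The steps above pass `response.body` straight to Cheerio without checking that the request succeeded. A minimal sketch of a guard follows, assuming the Unirest response exposes a numeric `status` and a string `body` for HTML pages. `ensureHtml` is a hypothetical helper, not part of Unirest.

```javascript
// Hypothetical guard: take a Unirest-style response object and return
// its HTML body, or throw if the request did not succeed.
const ensureHtml = (response) => {
  if (!response || response.status !== 200) {
    const status = response ? response.status : "no response";
    throw new Error(`Request failed with status: ${status}`);
  }
  if (typeof response.body !== "string" || response.body.length === 0) {
    throw new Error("Response body is empty or not a string");
  }
  return response.body;
};
```

With this in place, the last step could become `cheerio.load(ensureHtml(response))`, so a blocked or failed request raises an error instead of silently producing empty results.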

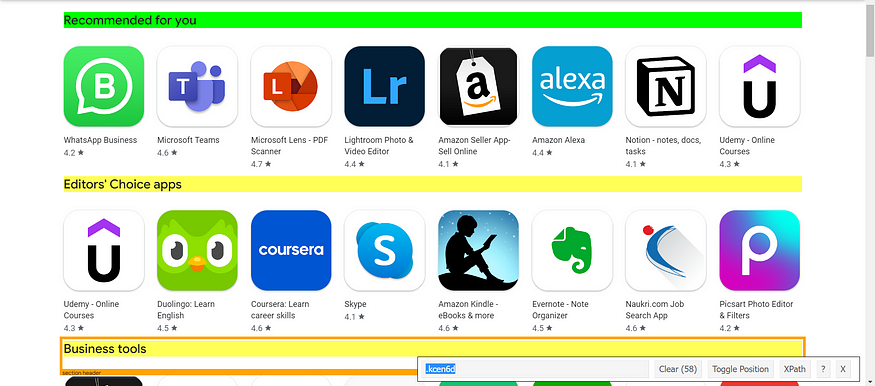

Now, we will prepare our parser by searching the tags with the help of the CSS selector gadget, as stated above in the Requirements section.

All the titles you saw above are inside elements with the class .kcen6d. So, the parser for them will look like this:

let category = [];

$(".kcen6d").each((i, el) => {
  category[i] = $(el).text();
});

We declared an array to store the titles. Then, with the help of the Cheerio instance $ that we declared above, we extracted the text of every element matching the selector.

Now, we will scrape the Apps under these titles.

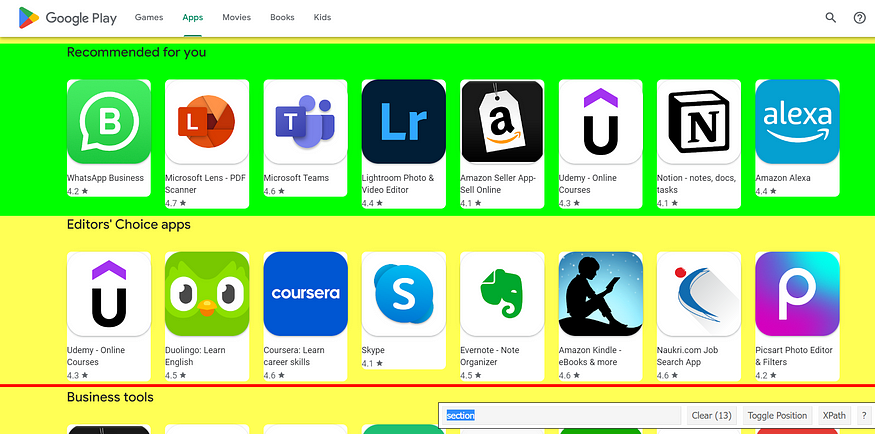

These apps sit inside a section tag, which acts as their container. So its parser would look like this:

let organic_results = [];

$("section").each((i,el) => {

let results = [];

if(category[i] !== "Top charts" && category[i] !== undefined)

{

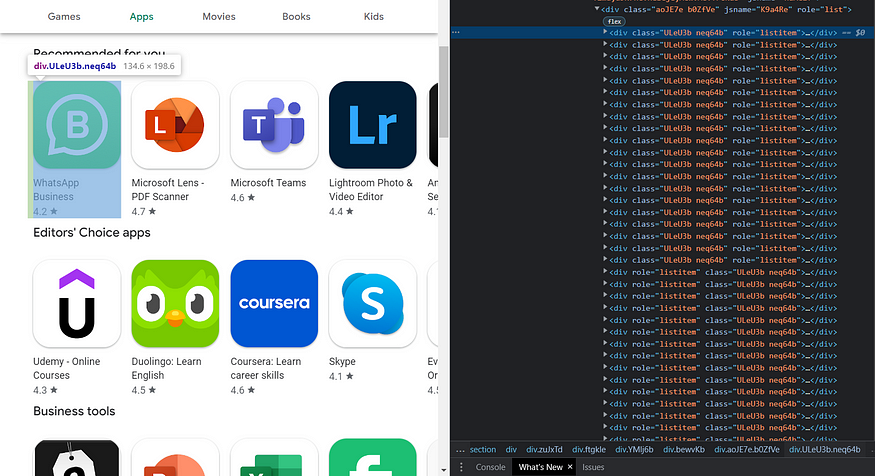

$(el).find(".ULeU3b").each((i,el) => {

results.push({

app_name: $(el).find(".Epkrse").text(),

rating: $(el).find(".LrNMN").text(),

thumbnail: $(el).find(".TjRVLb img").attr("src"),

link: $(el).attr("href")

})

})

organic_results.push({

category: category[i],

results: results

})

}

})

First, we selected the tag for the container as shown in the above image. Then we declared an array to store the App results inside it.

After that, we defined a condition that if an element in category the array consists of the title “Top Charts” and an element in the category array is undefined, then we will not do anything. If all the conditions are true, we select the tag for each App and loop over it to get the needed details and store it in the results array.

Here you can see which parent tag I selected for collecting the data:

After that, we stored the results and the current title in the category array in the new array named as organic_results.
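The skip condition can be checked without any scraping. Below is a minimal sketch with made-up sample data. `groupByCategory` is a hypothetical helper that mirrors the condition used in the parser.

```javascript
// Hypothetical helper: pair each section's results with its title,
// skipping "Top charts" and sections that have no title.
const groupByCategory = (category, sections) => {
  const organic_results = [];
  sections.forEach((results, i) => {
    if (category[i] !== "Top charts" && category[i] !== undefined) {
      organic_results.push({ category: category[i], results });
    }
  });
  return organic_results;
};

// Made-up sample data: three sections, but only two titles.
const category = ["Recommended for you", "Top charts"];
const sections = [
  [{ app_name: "WhatsApp Messenger" }],
  [{ app_name: "Some charted app" }],
  [{ app_name: "App in an untitled section" }],
];

const grouped = groupByCategory(category, sections);
console.log(grouped.map((entry) => entry.category)); // [ 'Recommended for you' ]
```

The second section is dropped because its title is “Top charts”, and the third because it has no matching title, so its category is undefined.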

Now, our results should look like this:

{

category: 'Recommended for you',

results: [

{

app_name: 'WhatsApp Messenger',

rating: 4.1,

thumbnail: 'https://play-lh.googleusercontent.com/bYtqbOcTYOlgc6gqZ2rwb8lptHuwlNE75zYJu6Bn076-hTmvd96HH-6v7S0YUAAJXoJN=s256-rw',

link: 'https://play.google.com/store/apps/details?id=com.whatsapp'

},

{

app_name: 'YouTube',

rating: 4.1,

thumbnail: 'https://play-lh.googleusercontent.com/lMoItBgdPPVDJsNOVtP26EKHePkwBg-PkuY9NOrc-fumRtTFP4XhpUNk_22syN4Datc=s256-rw',

link: 'https://play.google.com/store/apps/details?id=com.google.android.youtube'

},

{

app_name: 'Instagram',

rating: 4.3,

thumbnail: 'https://play-lh.googleusercontent.com/c2DcVsBUhJb3UlAGABHwafpuhstHwORpVwWZ0RvWY7NPrgdtT2o4JRhcyO49ehhUNRca=s256-rw',

link: 'https://play.google.com/store/apps/details?id=com.instagram.android'

},

.....
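The link values in this output are absolute URLs. If the href attributes on the page are relative, they need the site origin added. Here is a minimal sketch using the built-in URL class instead of string concatenation. `BASE_URL`, `toAbsoluteLink`, and `getAppId` are hypothetical names introduced for illustration.

```javascript
// Origin used to resolve relative hrefs.
const BASE_URL = "https://play.google.com";

// Hypothetical helper: turn a relative or absolute href into an absolute URL string.
const toAbsoluteLink = (href) => new URL(href, BASE_URL).href;

// Hypothetical helper: read the package id from a details link.
const getAppId = (link) => new URL(link, BASE_URL).searchParams.get("id");

console.log(toAbsoluteLink("/store/apps/details?id=com.whatsapp"));
// https://play.google.com/store/apps/details?id=com.whatsapp

console.log(getAppId("https://play.google.com/store/apps/details?id=com.instagram.android"));
// com.instagram.android
```

Resolving against a base leaves already-absolute links unchanged, and the package id can serve as a stable key if the results are stored later.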

Here is the complete code:

const unirest = require("unirest");
const cheerio = require("cheerio");

const getGooglePlayData = async () => {
  const url = "https://play.google.com/store/apps";

  // Fetch the raw HTML with a browser-like User-Agent.
  const response = await unirest.get(url).headers({
    "User-Agent":
      "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/104.0.0.0 Safari/537.36",
  });

  const $ = cheerio.load(response.body);

  let category = [],
    organic_results = [];

  // Collect the section titles.
  $(".kcen6d").each((i, el) => {
    category[i] = $(el).text();
  });

  // Collect the apps listed under each title.
  $("section").each((i, el) => {
    let results = [];

    // Skip "Top charts" (loaded by JavaScript) and sections without a title.
    if (category[i] !== "Top charts" && category[i] !== undefined) {
      $(el).find(".ULeU3b").each((j, app) => {
        results.push({
          app_name: $(app).find(".Epkrse").text(),
          rating: parseFloat($(app).find(".LrNMN").text()),
          thumbnail: $(app).find(".TjRVLb img").attr("src"),
          link: "https://play.google.com" + $(app).find("a").attr("href"),
        });
      });

      organic_results.push({
        category: category[i],
        results: results,
      });
    }
  });

  console.log(organic_results[0]);
};

getGooglePlayData();

Conclusion:

In this tutorial, we learned to scrape Google Play Store results with Node.js. Feel free to message me if I missed something. Follow me on Twitter. Thanks for reading!